Citizen engagement is always a top priority for governments, and a high level of citizen engagement is considered to be an indicator of a developed country.

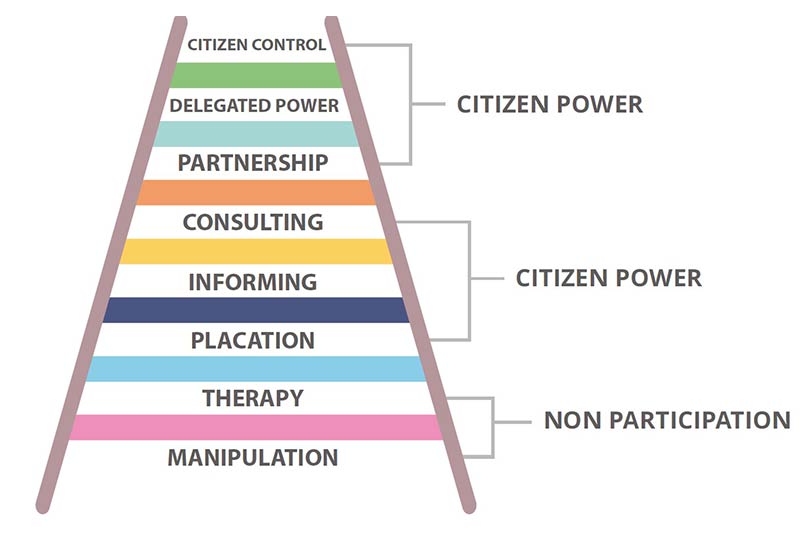

The ladder of participation introduced by Sherry R. Arnstein in 1969 shows three different zones of citizen participation. The two bottom rungs describe a zone of non-participation, where governments use manipulation to cure and educate participants instead of enabling and empowering citizens to participate.

The second zone is where governments allow citizens to have a voice and be heard. Informing and consultation are the rungs where governments inform citizens about their decisions and directions and seek input from powerholders and citizens. Here a voice is heard but has no muscle: there is no real change and no right to decide.

The third zone represents the highest level of power, where the relationship between governments and citizens is more of a partnership and citizen control grows with increasing degrees of decision-making.

Ladder of citizen engagement

Governments around the world have experienced one or more of the stages above, from informing to empowering: from providing citizens with objective information on government plans to the highest possible level of customer engagement, where the government opens all doors to hear customers’ voices (suggestions and complaints) that can drive change.

Crowdsourcing is an effective tool for citizen participation. It first appeared as a business practice in which an activity is outsourced to the end customers, or the crowd. The word crowdsourcing also reflects efficiency, because it is a low-cost solution; customer centricity, because it involves large numbers of people; and its value as a business model.

Crowdsourcing is a type of smart/online activity in which an individual, organisation or private business proposes a task to a group of individuals of varying knowledge and different interests, who respond through a call to the contact center, a text message, or even a photo or a video. Undertaking the task is completely voluntary.

Crowdsourcing is a practice that should complement the efforts of building smart cities. It is a tool that helps ensure services are provided in a satisfactory manner and that adds an element of smartness for both citizens and cities.

Collecting customer feedback via traditional methods, including websites, long emails and phone calls, is no longer relevant to our smart era or convenient for the smart customer. Rather, social media channels such as WhatsApp, Twitter and Facebook have become more convenient for customers, though not for governments.

Most crowdsourcing solutions include the following four steps:

1. Provide a mobile application (customised to meet different needs and scenarios) to gather information (customers’ complaints or feedback) from individuals and public or private parties.

2. Identify the location and assign the issue to the concerned department, using tools built into the application, so that the problem is solved in a systematic manner.

3. Have the concerned department take corrective action and address the issue within a well-defined timeline.

4. Inform the end user (the customer) of the update to sustain customer satisfaction.
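The four steps above can be sketched as a small report lifecycle. This is only an illustrative sketch, not a description of any particular product: the `Report` fields, the routing table, the three-day resolution window and the sample report are all assumptions made up for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import List, Optional

# Hypothetical routing table: issue category -> concerned department.
DEPARTMENT_BY_CATEGORY = {
    "pothole": "Roads",
    "streetlight": "Electricity",
    "waste": "Sanitation",
}

# Hypothetical "well-defined timeline" for corrective action.
RESOLUTION_WINDOW = timedelta(days=3)


@dataclass
class Report:
    """Step 1: what the mobile application gathers from the customer."""
    reporter: str
    category: str
    description: str
    latitude: float
    longitude: float
    submitted_at: datetime
    department: str = "unassigned"
    due_at: Optional[datetime] = None
    status: str = "new"
    notifications: List[str] = field(default_factory=list)


def assign(report: Report) -> Report:
    """Step 2: route the located issue to a department and set a deadline."""
    report.department = DEPARTMENT_BY_CATEGORY.get(
        report.category, "General Services"
    )
    report.due_at = report.submitted_at + RESOLUTION_WINDOW
    report.status = "assigned"
    return report


def resolve(report: Report, resolved_at: datetime) -> Report:
    """Step 3: record the corrective action and whether it met the deadline."""
    on_time = report.due_at is not None and resolved_at <= report.due_at
    report.status = "resolved" if on_time else "resolved_late"
    return report


def notify(report: Report) -> str:
    """Step 4: inform the customer of the update."""
    message = (
        f"{report.reporter}: your {report.category} report was handled by "
        f"{report.department} ({report.status})."
    )
    report.notifications.append(message)
    return message


# Made-up sample report, for illustration only.
report = Report(
    reporter="Sara",
    category="pothole",
    description="Deep pothole near the school",
    latitude=25.2048,
    longitude=55.2708,
    submitted_at=datetime(2024, 1, 1, 9, 0),
)
assign(report)
resolve(report, resolved_at=datetime(2024, 1, 2, 15, 0))
print(notify(report))
```

Keeping the deadline on the report itself makes it easy to tell, at notification time, whether the department met its timeline.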

Crowdsensing = Crowdsourcing + Analytics + IoT.

Crowdsensing is simply the next generation of Crowdsourcing, where you add two more components to the above-mentioned steps in order to back your solution with the Analytics arm and the IoT flavor.

5. Use the power of data to analyse the customer voice alongside other complaints from the same location, and correlate customer demographic information with customer insights.

6. Use innovative IoT solutions to monitor the location, so that the fix is sustained and new problems are caught proactively.
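As a rough illustration of the analytics step, complaints can be grouped by location to surface recurring problems, which are then natural candidates for IoT monitoring. The sample complaints, the area names and the threshold below are made up for the example; a real deployment would choose its own grouping and cut-off.

```python
from collections import Counter

# Made-up sample complaints: (area, category).
complaints = [
    ("Downtown", "pothole"),
    ("Downtown", "pothole"),
    ("Downtown", "streetlight"),
    ("Harbour", "waste"),
    ("Downtown", "pothole"),
]

# Hypothetical cut-off: how many similar complaints make a hotspot.
HOTSPOT_THRESHOLD = 3


def find_hotspots(records, threshold=HOTSPOT_THRESHOLD):
    """Return (area, category) pairs reported at least `threshold` times."""
    counts = Counter(records)
    return sorted(pair for pair, n in counts.items() if n >= threshold)


hotspots = find_hotspots(complaints)
print(hotspots)
```

The same grouping could be extended with demographic fields to correlate who is reporting with what is being reported.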