In response to the recent global events that are causing consumer shifts, many organisations are accelerating their digital transformation efforts. Digital transformation has gained importance and is perceived as a strategy for both survival and growth in the new normal. This has increased the need to use innovative technologies to create new business models, products, or services.

As decades-old IT systems running traditional workloads are modernised, there is still a need, as there always has been, for reliable, scalable and secure infrastructure. One technology that is both synonymous with such efforts and non-negotiable for them is cloud. Cloud services are now imperative, and make a real difference in ensuring that important enterprise services can keep going in almost all scenarios.

However, one common problem that financial services, government agencies and businesses face when moving to cloud services is ensuring that ongoing services run well even as the organisation migrates to newer solutions. Adapting to current technological trends while eliminating the risk of breaking existing systems and interrupting current business operations is vital.

Any organisation would baulk at the prospect of migrating to a cloud environment in one massive move. Incremental modernisation allows them to continue running their mission-critical applications on their current infrastructure while adapting and building new cloud-native applications in parallel.

Organisations across both the private and public sectors have begun to alter their perception of migrating workloads and applications to the cloud. Beyond a doubt, shifting from a legacy to a managed cloud infrastructure is daunting on many levels. Reluctance to discard proprietary technology accumulated over the years can hold organisations back from making the move. Concerns over data latency and volumes linger, especially when it comes to streaming data using the public cloud. Having the right people, processes and systems is a serious consideration. Combined with the cost of technology, infrastructure and reorganisation, these give good reasons for pause.

The need of the hour is for these organisations to see a reduction in infrastructure cost, the ability to scale up and support a several-fold increase in traffic, reduced time to deploy and a simplified production rollout and recovery process. Enterprises need to roll out digital capabilities rapidly by improving overall time-to-market and reducing the total cost of ownership. One solution that can ease transformation is to use container-based technology to develop, build, package and deploy applications and business solutions in a more efficient, secure and scalable way. Cloud-native solutions contribute towards a reduction in long-term operations costs, better system resilience, more efficient processes and enhanced security.

This raises the question: Do organisations have the capability to support cloud-native solutions that enable holistic improvement of infrastructure and enhance the efficiency, scalability and security of their operations?

The OpenGovLive! Virtual Breakfast Insight held on 24 November 2021 aimed to help delegates understand ways to overcome the barriers to successful cloud migration and to modernise infrastructure and application delivery to better serve citizens and customers.

Embracing the inevitability of a hybrid cloud reality

Mohit Sagar, Group Managing Director and Editor-in-Chief, OpenGov Asia, kicked off the session with his opening address.

The pandemic has vaulted governments and businesses headfirst into the next stage of digital transformation and online services. In the region, the Singapore government has taken the lead, with its investment in public cloud estimated to amount to US$3.6 billion by 2023 and 70% of eligible government systems to be on the commercial cloud by 2023.

There is a need to rethink cloud strategy, Mohit asserts. There are too many legacy systems and organisations cannot afford to hide behind those systems anymore.

Currently, citizen happiness is the most important benchmark for governments and enterprises because it is about the uptake of the technology, Mohit contends. Given that the technology is here to stay, there is a need to look into upskilling the workforce. When planning, he says, “Technology has to be seen as an investment and not an expense.”

What cloud offers is the flexibility to rapidly respond to the changes demanded by digitally-savvy citizens. Agencies now have the capability to not only move workloads between on-premises data centres and public cloud but also make a change and upload data instantly.

Agencies that embraced cloud services proved more responsive and were able to continue operating remotely and serving their citizens, demonstrating agility, scalability and speed even amid a pandemic.

Against such a backdrop, organisations must boldly accept the new digital reality. They must harness technology to enhance the working experience and drive organisational goals in the new normal. And there are a lot of solutions available now, Mohit acknowledges. Global companies have been looking into the design of high-performance computing solutions that will tackle some of the world’s toughest challenges.

At the same time, navigating this shifting terrain must be done securely – there is a need to bake security into the process and tools. This means that security is readily built into the infrastructure across workloads and applications. Compliance and regulation are also an intrinsic part of creating a safe environment. Policy and guidelines are established to provide accountability and build trust with citizens and consumers alike.

But neither security nor compliance concerns are a reason to not transform. “Do not hide behind safety or governance,” Mohit cautions. “These issues should not deter people from embracing technology – they need to be confronted, not avoided.”

Ultimately, cloud is here to stay, Mohit concludes. Organisations can get a head start now or play the more tenuous game of catch-up later.

Firmly convinced that the transformation need not be done alone, he urges delegates to partner with organisations with the expertise to facilitate digital transformation. The process needs to be done at scale and with speed. The right partners bring a wealth of expertise and experience that will make the journey far easier to manage and navigate.

Exploring international use cases of hybrid cloud platforms

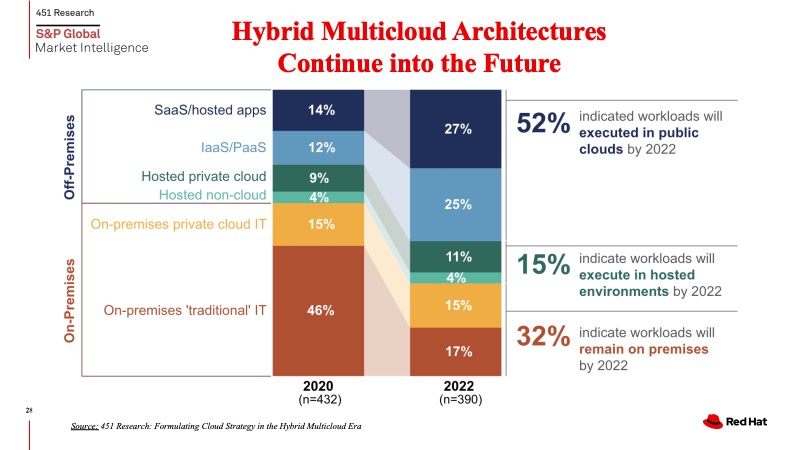

An Nguyen, Director, Cloud Solutions, Red Hat, spoke next on trends in hybrid cloud adoption.

An observes that there is a general shift among enterprises towards the public cloud, and even data centres have begun moving to the public cloud. Nonetheless, he also notes that the use of on-premises private cloud infrastructure is still significant, pointing to the inevitable shift to a hybrid cloud model.

An emphasised the need to be adaptive. For An, the added benefit of using hybrid cloud is the ability to offer better customer services through quicker feedback from consumers. Cloud providers can monitor and keep everything updated for the organisations. Apart from that, public cloud infrastructure providers can also give you the most complete view of what you are using.

To become agile, cloud is an essential component. While implementing hybrid cloud may not be easy initially, An contends that there are tremendous benefits to be reaped. These include improved security, application or data portability, automation and orchestration, ease of management or operations, ease of implementation or deployment and architectural consistency.

Leading companies have demonstrated the possible use cases and the solutions that Red Hat offers depending on the organisation’s needs. The company has done work across a wide range of sectors, and banking has been particularly active.

An shared that approximately 2,000 customers are using OpenShift for mission-critical systems around the world. Red Hat possesses a full-stack container platform and the operating systems to support applications to deliver the best business impact.

For Deutsche Bank, the impetus to implement multi-cloud technology stemmed from the desire to standardise and unify the platform for its myriad applications. Their journey began with the standardisation of the operating system, followed by optimisation through the OpenShift container platform. Since OpenShift serves all kinds of container workloads, it helped to streamline processes in Deutsche Bank and enable automation.

Red Hat also provided support and expertise to Amadeus on how they can move towards cloud-native workloads and navigate the complexities of their unique situation. In another instance, BMW needed help with expansion into new markets without investing in building data centres. To do that, they needed standardisation to move workloads from one country to another seamlessly. By adopting OpenShift Dedicated, BMW could connect devices across different public cloud providers.

An emphasises that Red Hat’s OpenShift is the industry’s leading enterprise Kubernetes application development platform, helping customers deliver new customer experiences, open new lines of business, and modernise their existing application portfolio. He encouraged delegates to reach out to him should they have any queries on the hybrid cloud model.

Peering into a digital future: Taking pre-emptive steps to stay ahead of the game

John Baddiley, Head of Strategic Relationships, Bank of New Zealand, shared how BNZ approached the move to hybrid cloud and elaborated on the journey thus far.

BNZ has been around for 160 years and employs over 5000 staff all over the country. They have a strong focus on customer outcomes and experience, which has been reflected in the awards that they have been recognised for over the past few years.

John says that a long history has many benefits, but can be a potential roadblock when it comes to digital transformation: it can be difficult to let go of something that has worked in the past. However, BNZ has taken the step to change, modernise and transform its operations.

Cloud adoption is a key part of their transformation story. Rapid change, innovation and adoption mean that BNZ customers expect more every day. “Approaches to technology that worked five years ago will not work today,” he believes.

Monolithic solutions do not provide the flexibility required to adapt or provide the resilience of an ‘always on’ world. He is convinced that systems need to be able to change rapidly and securely to meet the expectations of customers, regulators, and shareholders. At these crossroads, technology can be a strategic advantage or a strategic inhibitor.

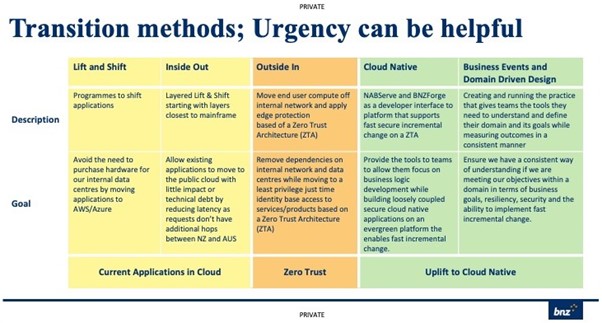

The desire to adopt cloud was a strong driver for BNZ when they first embarked on the journey. He shared that the various intents and goals BNZ had in mind led them to take different strategies in the hybrid cloud transformation, depending on the application and its needs.

A strategy was to refactor applications such that they are cloud-native. They had to bite the bullet to lift and shift some applications from on-prem to cloud environments because the previous best practices are no longer ideal.

One approach was to lift and shift applications that were closest to the mainframe. Another strategy was the ‘Outside In’ one, where the customer- or banker-facing applications were brought into a zero trust architecture. The third approach was to build applications that are cloud-native using their engineering foundations to deliver and consume cloud services.

However, John cautions that several dependencies must be addressed and operationalised before shifting or building applications on the cloud. These include:

- Engineering Platforms including integration, deployment pipelines, monitoring and management.

- Cloud skills and experience will be required. Choose a model (uplift, capability enhance, outsource) that is right for the workload.

- Connectivity to and between the cloud environment(s) from legacy data centres

- Finance and Cloud Accounting capabilities to ensure that business units have visibility of current and forecast spend

- Patterns for cloud-native software architecture and application transition must be developed, shared, and enforced

- Security standards and patterns must be defined and deployable

Speaking from experience, John says that organisations embarking on this journey need to learn to manage risk during transformation. BNZ has built a cloud governance ecosystem that integrates all aspects of governance, risk management and regulatory compliance, so that risk is managed across the ecosystem rather than by only one party.

Apart from that, John stresses the importance of engaging with regulators to give them confidence that the shift of the workload to the cloud is robust.

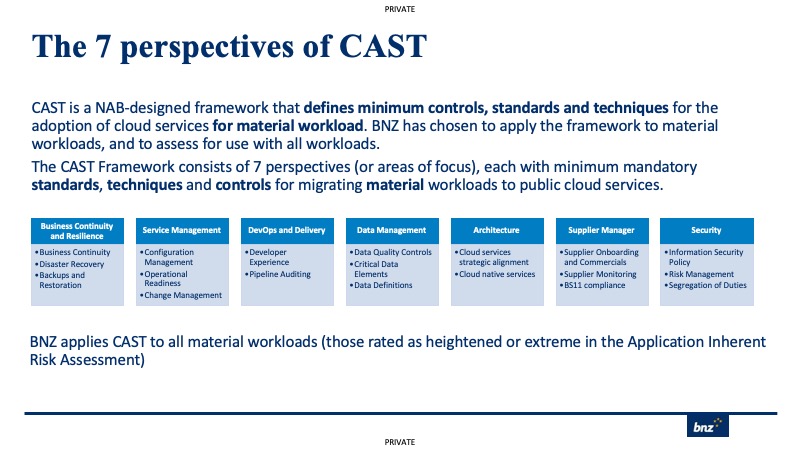

To that end, BNZ uses the 7 perspectives of CAST, a NAB-designed framework that defines minimum controls, standards and techniques for the adoption of cloud services for material workloads.

BNZ has chosen to apply the framework to material workloads and to assess it for use with all workloads. The CAST Framework consists of 7 perspectives (or areas of focus), each with minimum mandatory standards, techniques, and controls for migrating material workloads to public cloud services.

BNZ applies CAST to all material workloads (those rated as heightened or extreme in the Application Inherent Risk Assessment).

In closing, John highlights that multi-cloud treatment varies significantly by business. He stresses that the most critical processes and applications must be designed to migrate quickly if required.

Being able to stay agile, nimble and relevant is the ultimate key to surviving in a rapidly changing world.

Interactive Discussion

After the informative presentations, delegates participated in interactive discussions facilitated by polling questions. This activity is designed to provide live-audience interaction, promote engagement, hear real-life experiences, and facilitate discussions that impart professional learning and development for participants.

On being asked about their organisation’s biggest challenge in digital transformation strategy, delegates were evenly split between culture (48%) and skills (48%).

A delegate opined that culture is the biggest challenge because digital transformation requires a different way of working, understanding finances and budgeting.

Asked which elements of transformation are the most challenging in their organisation, half of the delegates felt that (IT/Software) architecture and development (50%) was the most challenging element. About 40% thought leadership was an issue, while 5% felt (IT) operations was of concern.

A delegate said they had difficulty in bringing legacy products onto cloud-native platforms within the healthcare industry.

Mohit agrees that the journey is not an easy one; however, it is an inevitable one. He reminds delegates that this is where experts can help. The technologies used in 2020 and 2021 were “band-aid technologies.” Organisations need to prepare themselves to be ready for the next hit. Digital transformation is not a strategy but something that needs to be deployed. Security and privacy cannot be a stumbling block on that journey, Mohit cautions them.

The next question asked what percentage of workloads delegates see themselves moving to cloud over the next 3 years. Just under half (42%) indicated that more than 50% would be moved to cloud, followed by 30% – 50% of the workload (37%) and 10% – 30% of the workload (21%).

Mohit firmly believes that a cloud-first policy is necessary because it is possible to have both on-prem and cloud services. Critical data can be kept on-prem but others can go into the new environment.

John adds that it is a question of capacity and finance. The selection of applications and data that go on cloud is a matter of how much work is associated with shifting each application and the maintenance to ensure that everything is working. For BNZ, John estimated that 80% of the workload will run on cloud eventually and all new applications are cloud-native.

When asked how he manages compliance and regulators, John explained that CAST helped to accelerate the shift of workloads to the cloud by demonstrating the measures that are in place.

Another delegate wanted to know how critical it is to have a mature strategy or process like CAST before migrating critical applications to cloud. John says it depends on the organisation’s risk appetite and how much regulators care about how organisations run workloads. He feels that every organisation needs some form of risk control framework but that it does not necessarily need to be as comprehensive as CAST.

It is also not about selecting one total cloud, John opines. When choosing where to deploy individual applications, organisations need to understand the capacity of their teams as well as the suitability of the features of the cloud.

John adds that it is important to examine the dependencies and then shift the applications that do not have dependencies first. Shifting things to cloud means that infrastructure gets taken care of, which affords people more time to deliver value and focus on things that matter to the business.

On the most important outcome they are seeking in their digital transformation, delegates were equally split between the reliability of newly deployed changes (26%) and an innovative platform and culture to support new ideas (26%). Similarly, better security and governance models got 16%, as did operational efficiency (16%). The remaining delegates voted for reducing the cost of operations (11%) and the speed of developing and deploying changes (5%).

Polled about their top consideration in adopting or choosing multi-cloud, most delegates selected inter-communication and workload portability among the clouds (28%). This was followed by an even split between tools and services available on the new cloud (17%) and data sovereignty and residency (17%). A further group was equally divided in a three-way split between support within multi-clouds (11%), cost optimisation (11%) and complexity of migrating existing apps (11%). The remaining delegates (5%) chose the availability of the skill set to navigate the new cloud as the top consideration.

The final question asked what the biggest benefit is that Edge Computing brings to their organisation as part of their digital transformation strategy. About a third (35%) indicated that Fast-to-Adopt IoT Solutions was the biggest benefit, followed by a quarter (25%) who opted for fast, affordable networks at the edge. The remaining votes went to hardware-based security leadership (15%) and AI and computer vision expertise (5%).

Asked to share more on how BNZ baked security and compliance enforcement into its cloud deployments, John explained that BNZ has CSAMs for every cloud service, which define how the service must be configured for each use case. On top of that, they use an attestation process with CAST to check that implementation teams are following the architecture and policies correctly.

BNZ is working towards embedding as many of the CAST checks as possible into its pipelines, although this is at a very early stage. He added that they are also building up patterns to enable Zero Trust Architectures, which will help bake the infrastructure aspects of security into their solutions.
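As an illustration only, a check of the kind that might be embedded in a deployment pipeline could look something like the Python sketch below. It compares a proposed deployment's settings against an approved baseline for that service and use case. The baseline contents, the `Deployment` type and the `find_violations` function are all hypothetical names invented for this sketch; they are not BNZ's tooling or actual CAST controls.

```python
from dataclasses import dataclass, field

# Hypothetical approved baselines, keyed by (service, use case).
# The settings shown are illustrative assumptions, not real CAST or BNZ controls.
BASELINES = {
    ("object_storage", "customer_data"): {
        "encryption_at_rest": True,
        "public_access": False,
    },
}


@dataclass
class Deployment:
    """A proposed cloud deployment, as a pipeline step might receive it."""
    service: str
    use_case: str
    settings: dict = field(default_factory=dict)


def find_violations(deployment):
    """Return a list of violations; an empty list means the check passes."""
    baseline = BASELINES.get((deployment.service, deployment.use_case))
    if baseline is None:
        # No approved configuration exists for this combination.
        return [f"no approved baseline for {deployment.service}/{deployment.use_case}"]
    violations = []
    for key, required in baseline.items():
        actual = deployment.settings.get(key)
        if actual != required:
            violations.append(f"{key}: expected {required!r}, found {actual!r}")
    return violations
```

A pipeline step built along these lines would stop the rollout whenever `find_violations` returns a non-empty list, so that a misconfigured service is caught before it reaches production rather than at a later manual review.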

Apart from that, John revealed that they run a secure code warrior programme, which teaches code security practices to all of their developers. He emphasised that it is important to remember that security is everyone’s responsibility, not just the security team.

Conclusion

In closing, Guan Hao, Industry Technical Specialist, Intel, thanked all the delegates for their participation and insights on the topic.

He reiterated that applications and data are growing and organisations will need an infrastructure that can handle the load. Given the changing reality that the world currently finds itself in, he stressed the importance of employing the right technologies to help smooth the process of digital transformation. To that end, cloud is the cornerstone of digital transformation.

On top of that, organisations will need flexible infrastructure to handle the demands of storage, network and multiple cloud platforms.

Finally, An recapped the many use cases for hybrid cloud that delegates need to understand to be able to identify their unique journey. He urged the delegates to consider the intercloud connection and make sure that architecture is cloud-native.

He invited delegates to reach out to him and the team if they had queries or wanted to understand the unique value that hybrid cloud can bring to their organisations.