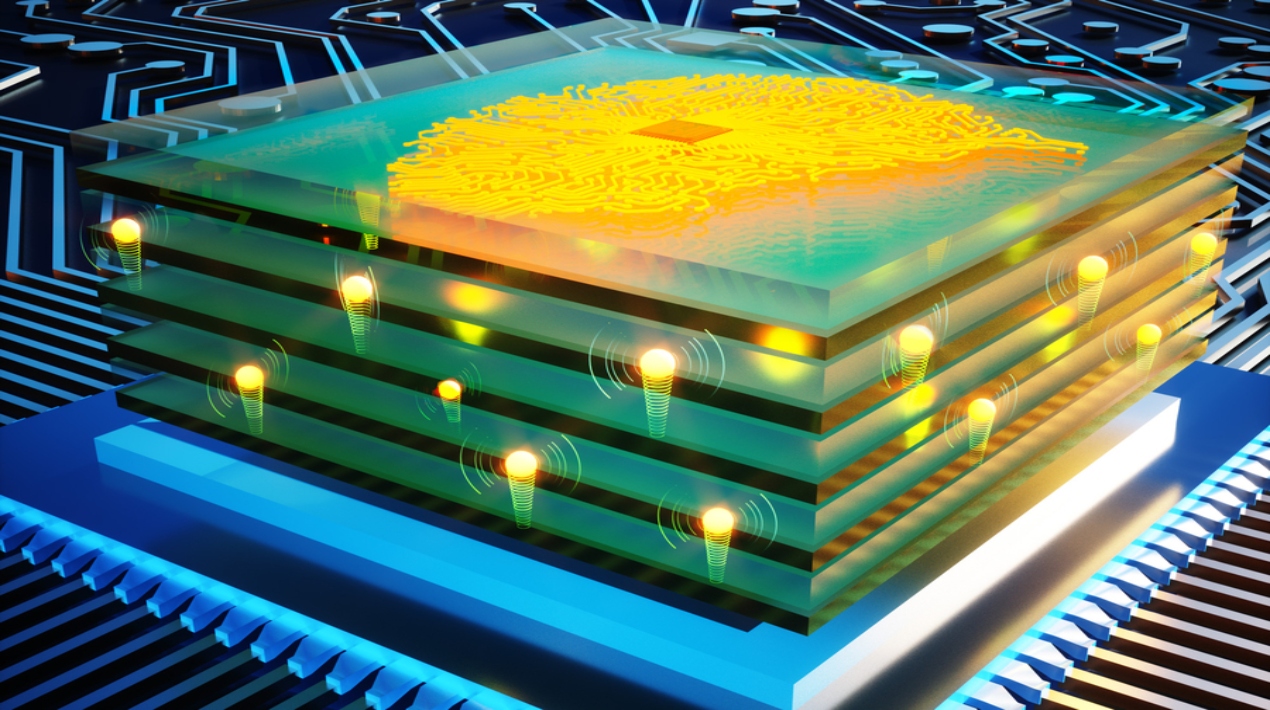

Programmable resistors are essential building blocks in analogue deep learning, just as transistors are in digital processors. Researchers can create a network of analogue artificial “neurons” and “synapses” that execute computations just like a digital neural network by repeating arrays of programmable resistors in complex layers. After that, the network can be trained to perform complex AI tasks such as image recognition and natural language processing.

A multidisciplinary research team from the Massachusetts Institute of Technology (MIT) set out to increase the speed of a type of artificial analogue synapse that they had previously developed. By using a practical inorganic material in the fabrication process, they made their devices run a million times faster than previous versions, which is also roughly a million times faster than the synapses in the human brain.

This inorganic material also makes the resistor highly energy efficient. Unlike the materials used in the earlier version of the device, the new material is compatible with silicon fabrication techniques. This has made it possible to fabricate devices at the nanometre scale, which may pave the way for their integration into commercial computing hardware for deep-learning applications.

These programmable resistors greatly accelerate neural network training while also significantly lowering the cost and energy required. This could speed up the process by which researchers develop deep learning models for applications such as fraud detection, self-driving cars, or medical image analysis.

Analogue deep learning is faster and more energy-efficient than digital deep learning for two main reasons. First, computation is performed in memory rather than in a separate processor, so massive amounts of data are not constantly transported between the two. Second, analogue processors carry out operations in parallel: because all computation happens at once, an analogue processor does not need more time to finish new operations as the size of the matrix increases.
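To make the in-memory, all-at-once computation concrete, here is a minimal sketch of an idealized resistor array performing a matrix-vector multiplication. It assumes a textbook crossbar model, not the MIT team's actual circuit: each resistor passes a current equal to its conductance times the applied voltage (Ohm's law), and the currents meeting on a shared output column add up (Kirchhoff's current law). The function name and the example values are hypothetical.

```python
def crossbar_output_currents(conductances, input_voltages):
    """Return the current collected on each output column of an ideal crossbar.

    conductances[i][j] is the conductance of the resistor joining input
    row i to output column j (the stored "weight").
    input_voltages[i] is the voltage applied to row i (the "activation").
    """
    n_rows = len(conductances)
    n_cols = len(conductances[0])
    if len(input_voltages) != n_rows:
        raise ValueError("need one input voltage per row")
    # In hardware every product and every sum happens simultaneously;
    # the loops here only spell out the arithmetic.
    return [
        sum(conductances[i][j] * input_voltages[i] for i in range(n_rows))
        for j in range(n_cols)
    ]


# Hypothetical 2x2 array: weights stored as conductances, inputs as voltages.
weights = [[1.0, 2.0],
           [3.0, 4.0]]
inputs = [1.0, 0.5]
currents = crossbar_output_currents(weights, inputs)  # [2.5, 4.0]
```

In this idealized picture the weights never leave the array, and a physical array would produce every column current at once rather than one product at a time, which is the property the paragraph above describes.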

The essential component of the new analogue processor technology developed by MIT is a protonic programmable resistor. These resistors are measured in nanometres (one nanometre is one billionth of a metre) and are arranged in an array, like the squares of a chessboard.

Learning occurs in the human brain through the strengthening and weakening of synapses, the connections between neurons. Deep neural networks have long used the same strategy, with the network weights programmed by training algorithms. In this new processor, analogue machine learning is made possible by increasing and decreasing the electrical conductance of protonic resistors.

The motion of the protons governs the conductance. To change the conductance of a resistor, extra protons are either pushed into the channel or taken out. An electrolyte (like the one in a battery) that conducts protons but stops electrons is used to achieve this.
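The programming step can be pictured with a toy model. The sketch below is an illustration under stated assumptions, not a model of the measured device: it assumes that each programming pulse moves a fixed number of protons, that conductance rises when protons are pushed in and falls when they are taken out, and that conductance saturates at a minimum and maximum value. The class name, the step size, and the limits are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ToyProtonicResistor:
    """Idealized programmable resistor with a linear, bounded response."""

    conductance: float = 0.5  # arbitrary units (hypothetical starting value)
    g_min: float = 0.0        # assumed lower limit of the channel
    g_max: float = 1.0        # assumed upper limit of the channel
    step: float = 0.125       # assumed conductance change per pulse

    def apply_pulses(self, n_pulses: int) -> float:
        """Apply programming pulses and return the new conductance.

        Positive n_pulses pushes protons into the channel (assumed to
        raise conductance); negative n_pulses takes them out.
        """
        target = self.conductance + n_pulses * self.step
        # Clamp to the range the channel is assumed to support.
        self.conductance = min(self.g_max, max(self.g_min, target))
        return self.conductance


synapse = ToyProtonicResistor()
synapse.apply_pulses(2)   # strengthen the weight: 0.5 -> 0.75
synapse.apply_pulses(-1)  # weaken it again:       0.75 -> 0.625
```

A training algorithm would decide how many pulses each resistor receives. Real devices are unlikely to respond this linearly, so the fixed step is only a convenient simplification.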

Moreover, because protons do not damage the material, the resistor can run for millions of cycles. The new electrolyte, phosphosilicate glass (PSG), enabled a programmable protonic resistor that is a million times faster than the team's previous device and that operates at room temperature. Thanks to PSG's insulating properties, almost no electric current passes through the material as the protons move, which makes the device highly energy efficient.

The researchers plan to re-engineer these programmable resistors for high-volume manufacturing, then study the properties of resistor arrays and scale them up so they can be incorporated into systems. They also want to study the materials to remove bottlenecks that limit the voltage required to transfer protons to and from the electrolyte.