Fog, mist, cloudlets. These meteorological terms are gaining popularity in the world of computing. They are meant to complement centralised cloud computing in the context of the Internet-of-Things (IoT).

IoT devices generate unprecedented volumes of data, and it is challenging to transmit all of it back to the cloud for processing. With the increasing need for smart end-user IoT devices and near-user edge devices to carry out a substantial amount of data processing with minimal computing, fog computing offers a way to decentralise applications, management, and data analytics into the network itself using a distributed and federated compute model.

At the moment, no consensus exists on the distinction among fog computing, mist computing, cloudlets, and edge computing. A recently released document from the U.S. Commerce Department’s National Institute of Standards and Technology (NIST) strives to provide a definition that practitioners and researchers can use to facilitate meaningful conversations.

The document provides the conceptual model of fog computing and its subsidiary mist computing, and aims to place these concepts in relation to cloud computing and edge computing.

The document also lists important aspects of fog computing and is intended to serve as a means for broad comparisons of fog computing capabilities, service models and deployment strategies.

Defining fog computing

Fog computing is defined by NIST as ‘a layered model for enabling ubiquitous access to a shared continuum of scalable computing resources’.

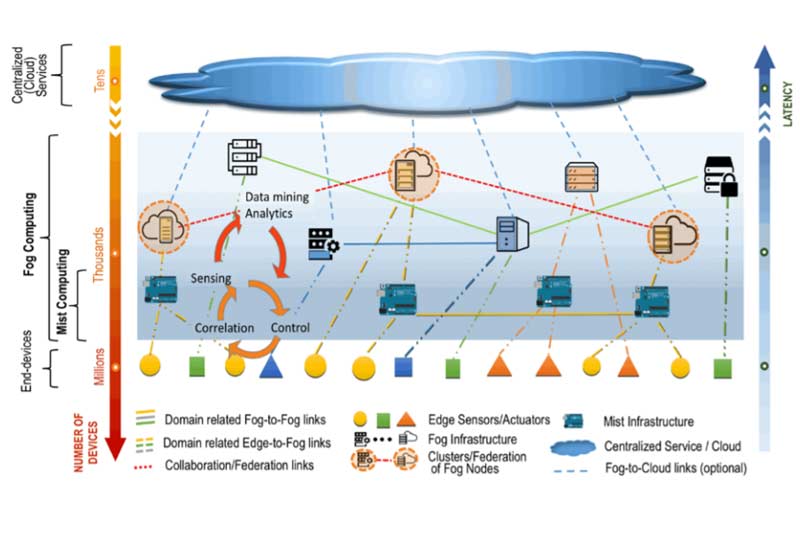

According to NIST, fog computing facilitates the deployment of distributed, latency-aware applications and services, and consists of physical or virtual fog nodes residing between smart end-devices and centralised cloud services.

Differentiating between edge and fog computing

The document defines edge computing as the network layer encompassing the end-devices and their users, providing, for example, local computing capability on a sensor, metering device or some other network-accessible device. This is the IoT network itself. Edge computing runs specific applications in a fixed logical location and provides a direct transmission service, whereas fog computing runs applications in a multi-layer architecture that decouples and meshes the hardware and software functions, allowing dynamic reconfiguration for different applications while performing intelligent computing and transmission services. Moreover, in addition to computation and networking, fog computing also addresses storage, control and data-processing acceleration.

Fog Nodes – the core component of the fog computing architecture

According to the NIST document, fog nodes are either physical components (such as gateways, switches, routers and servers) or virtual components (such as virtualised switches, virtual machines and cloudlets [1]) that are tightly coupled with the smart end-devices or access networks, and provide computing resources to these devices.

A fog node is aware of its geographical distribution and logical location within the context of its cluster. Fog nodes are often co-located with the smart end-devices, resulting in faster analysis of, and response to, the data generated by these devices than a centralised cloud service or data centre can offer. They provide some form of data management and communication services between the network’s edge layer, where end-devices reside, and the fog computing service or, when needed, the centralised (cloud) computing resources. The nodes can operate in a centralised or decentralised manner and can be configured as stand-alone fog nodes that communicate among themselves to deliver the service, or they can be federated to form clusters that provide horizontal scalability over dispersed geolocations.

Fog computing minimises the request-response time to and from supported applications, and provides the end-devices with local computing resources and, when needed, network connectivity to centralised services.
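As a rough illustration of the behaviour described above, the following Python sketch models a fog node that analyses readings locally and hands data to a centralised service only when needed. The class `FogNode`, its `capacity` limit and the `cloud_handler` callback are hypothetical names chosen for this example; they do not come from the NIST document, and the offload rule (a full buffer) is simply one assumed trigger.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class FogNode:
    """Hypothetical fog node: keeps readings locally, offloads when full."""
    name: str
    capacity: int  # assumed limit on readings held locally
    cloud_handler: Callable[[List[float]], None]  # stand-in for a cloud service
    buffer: List[float] = field(default_factory=list)

    def ingest(self, reading: float) -> str:
        """Store a reading; send the batch to the cloud once the buffer is full."""
        self.buffer.append(reading)
        if len(self.buffer) >= self.capacity:
            self.cloud_handler(list(self.buffer))
            self.buffer.clear()
            return "offloaded"
        return "local"

    def local_average(self) -> float:
        """Simple analytics performed close to the end-devices."""
        return sum(self.buffer) / len(self.buffer) if self.buffer else 0.0


# Usage: a list stands in for the centralised service.
uploaded: List[List[float]] = []
node = FogNode(name="gateway-1", capacity=3, cloud_handler=uploaded.append)
results = [node.ingest(r) for r in (20.0, 22.0, 24.0, 26.0)]
```

In this sketch the first two readings stay local, the third triggers an offload of the whole batch, and the fourth starts a new local buffer. A real deployment would likely use a different trigger, such as link availability or a policy set by the fog service.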

Six essential characteristics of fog computing

- Contextual location awareness, and low latency: Fog computing offers the lowest-possible latency due to the fog nodes’ awareness of their logical location in the context of the entire system and of the latency costs for communicating with other nodes.
- Geographical distribution: The services and applications targeted by fog computing demand widely distributed, but geographically identifiable, deployments. An example would be the delivery of high-quality streaming services to moving vehicles, through proxies and access points geographically positioned along highways and tracks.
- Heterogeneity: Fog computing supports collection and processing of data of different form factors acquired through multiple types of network communication capabilities.
- Interoperability and federation: Fog computing components must be able to interoperate, and services must be federated across domains.
- Real-time interactions: Fog computing applications involve real-time interactions rather than batch processing.
- Scalability and agility of federated, fog-node clusters: Fog computing is adaptive in nature, at cluster or cluster-of-clusters level, supporting elastic compute, resource pooling, data-load changes, and network condition variations.
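The first characteristic, awareness of the latency cost of talking to other nodes, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the function `pick_lowest_latency`, the node names and the latency figures are all hypothetical, and a real fog node would measure and refresh these values itself.

```python
from typing import Dict


def pick_lowest_latency(latencies_ms: Dict[str, float]) -> str:
    """Return the name of the peer with the smallest measured latency."""
    if not latencies_ms:
        raise ValueError("no candidate nodes")
    return min(latencies_ms, key=lambda name: latencies_ms[name])


# Hypothetical round-trip latencies seen by one fog node, in milliseconds.
measured = {"fog-a": 4.2, "fog-b": 1.8, "cloud": 85.0}
chosen = pick_lowest_latency(measured)
```

Here the node would route the request to `fog-b`, the nearest peer by latency, rather than to the centralised cloud.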

Similar to cloud computing deployment models, a fog node deployment can be private (for exclusive use by a single organisation comprising multiple consumers), community (for use by a specific community of consumers from organisations that have shared concerns), public (provisioned for open use by the general public), or hybrid (a composition of private, community or public nodes that remain unique entities but are bound together).

Mist computing

NIST defines mist computing as a lightweight and rudimentary form of fog computing that resides at the edge of the network fabric, bringing the fog computing layer closer to the smart end-devices. Mist computing uses microcomputers and microcontrollers to feed into fog computing nodes and potentially onward towards the centralised (cloud) computing services. It is not a mandatory layer of fog computing.
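One way to picture a mist layer feeding a fog node is the Python sketch below. It assumes, purely for illustration, that a microcontroller does a rudimentary filter-and-average step on each window of samples before passing a summary upward; the NIST document does not prescribe this behaviour, and `mist_summarise`, `fog_collect` and the threshold value are hypothetical.

```python
from statistics import mean
from typing import Iterable, List, Optional


def mist_summarise(samples: Iterable[float], threshold: float) -> Optional[float]:
    """Assumed mist-level step: drop samples below the threshold, average the rest."""
    kept = [s for s in samples if s >= threshold]
    return mean(kept) if kept else None


def fog_collect(summaries: Iterable[Optional[float]]) -> List[float]:
    """Assumed fog-level step: keep only the summaries the mist devices produced."""
    return [s for s in summaries if s is not None]


# Three hypothetical sample windows from one microcontroller.
windows = [[0.1, 0.2], [1.5, 2.5, 0.3], [3.0]]
forwarded = fog_collect(mist_summarise(w, threshold=1.0) for w in windows)
```

Because the mist layer is optional, the same fog node could equally receive raw samples directly from the end-devices.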


[1] According to Wikipedia, a cloudlet is a mobility-enhanced small-scale cloud datacenter that is located at the edge of the Internet.