Epidemiological models had difficulty predicting case rates throughout the COVID-19 pandemic. A new study by mathematicians from Brown University uses an advanced machine learning technique to explore the strengths and weaknesses of commonly used models and suggests ways of making them more predictive.

There is an old saying in the modelling field that ‘all models are wrong, but some are useful.’ What we show here is that the major COVID-19 models were wrong and also not very useful, at least in terms of predicting the course of the pandemic. There was a lot of Monday-morning quarterbacking, but not a lot of accurate predictions.

– George Karniadakis, Professor, Applied Mathematics, Brown University

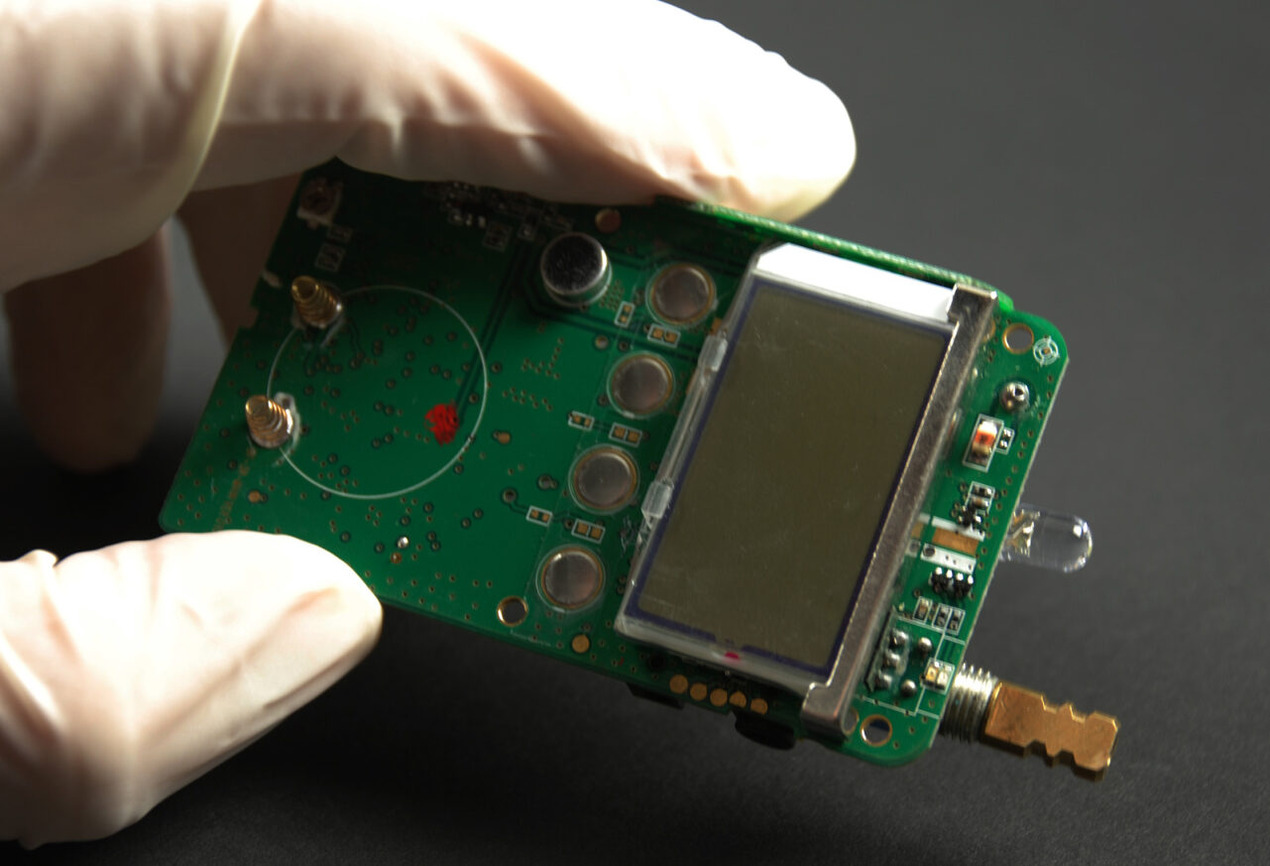

To find out why that was, the team looked at nine prominent COVID-19 models, all of which were some variation of the “susceptible-infectious-removed” or SIR model. These models divide a population into separate bins: those who have not yet been infected (susceptible), those who are infected and could spread the virus to others (infectious) and those who have had the infection and can no longer spread it (removed). More complicated versions of the SIR model include additional bins that capture rates of quarantine, hospitalisation, deaths and other quantities that could influence the spread of the virus.

Several factors affect the movement of individuals from one bin to another. Movement from “susceptible” to “infectious,” for example, depends on how efficiently the virus jumps from person to person along with how often people come in close contact with each other. Many of these factors cannot be observed directly, and so the models must infer their values from available data. In modelling terms, these factors are known as parameters.
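The bin structure described above can be sketched in a few lines of Python. This is a minimal illustration of the basic three-bin SIR model, not any of the nine models the study examined. The function name `simulate_sir` and the values chosen for the transmission rate `beta` and removal rate `gamma` are assumptions made for the example.

```python
def simulate_sir(beta, gamma, s0, i0, r0, days, dt=0.1):
    """Integrate the basic SIR equations with forward Euler steps.

    beta  : transmission rate per day (illustrative value, not from the study)
    gamma : removal rate per day (1 / average infectious period)
    Returns one (S, I, R) tuple per day, as fractions of the population.
    """
    s, i, r = s0, i0, r0
    steps_per_day = round(1 / dt)
    history = [(s, i, r)]
    for _ in range(days):
        for _ in range(steps_per_day):
            new_infections = beta * s * i * dt  # susceptible -> infectious
            new_removals = gamma * i * dt       # infectious -> removed
            s -= new_infections
            i += new_infections - new_removals
            r += new_removals
        history.append((s, i, r))
    return history


# Hypothetical outbreak: 1% of the population infectious at the start.
trajectory = simulate_sir(beta=0.3, gamma=0.1, s0=0.99, i0=0.01, r0=0.0, days=160)
peak_day = max(range(len(trajectory)), key=lambda d: trajectory[d][1])
```

Here `beta` and `gamma` play the role of the parameters mentioned above: they are the quantities a model has to infer from data because they cannot be observed directly.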

The study found that a major shortcoming of COVID-19 models was that they treated key parameter values as fixed over time, even though these factors shifted dramatically in the real world. For example, the community transmission rate of the virus varied widely depending on mask use, business closures and reopenings, and other measures.

Hospitalisation rates changed over time as the availability of hospital beds shifted. And the death rate changed with new treatments. All of these evolving factors changed the trajectory of case rates and deaths, but prominent models held these parameters steady in time, which led to poor predictions, the researchers found.
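To see why a fixed parameter can mislead, the sketch below runs the same simple SIR model twice: once with a constant transmission rate and once with a rate that changes on set days. The schedule in `beta_with_intervention` is entirely hypothetical and only stands in for events such as closures and reopenings. None of the numbers come from the study.

```python
def simulate(beta_of_day, gamma=0.1, s0=0.99, i0=0.01, days=120, dt=0.1):
    """Run an SIR model whose transmission rate is looked up each day.

    Returns the infectious fraction at the start of the run and after each day.
    """
    s, i = s0, i0
    infectious = [i]
    for day in range(days):
        beta = beta_of_day(day)
        for _ in range(round(1 / dt)):
            new_infections = beta * s * i * dt
            s -= new_infections
            i += new_infections - gamma * i * dt
        infectious.append(i)
    return infectious


def fixed_beta(day):
    # The assumption the study criticises: one value for the whole pandemic.
    return 0.3


def beta_with_intervention(day):
    # Hypothetical schedule: restrictions from day 10, reopening from day 50.
    if day < 10:
        return 0.3
    if day < 50:
        return 0.08
    return 0.25


fixed = simulate(fixed_beta)
varying = simulate(beta_with_intervention)
largest_gap = max(abs(a - b) for a, b in zip(fixed, varying))
```

The two runs agree exactly until the rate changes and then diverge, so a model calibrated on the early data with a fixed rate would miss what happens after the intervention.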

The next question was whether there might be a way to capture these changing parameters in epidemiological models. To do that, the team used physics-informed neural networks (PINNs) — a machine learning technique developed at Brown. PINNs are neural networks similar to those used to recognise images or transcribe speech to text.

But unlike standard neural networks, PINNs are equipped with equations describing the physical laws that govern a system. The team first used PINNs to discover velocities and pressures of fluid flows from images and videos. In those cases, PINNs were equipped with equations used in fluid dynamics. In this case, the team equipped the PINNs with equations used to calculate how pathogens spread.
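The idea of equipping a network with governing equations can be illustrated by the kind of loss such a method minimises: a term measuring misfit to the data plus a term measuring how badly a candidate trajectory violates the equations. The sketch below is a simplified stand-in, not the team's implementation. It contains no neural network, it approximates derivatives with finite differences where a real PINN would use automatic differentiation, and the names `sir_trajectory` and `pinn_style_loss` are invented for the example.

```python
def sir_trajectory(beta, gamma, s0=0.99, i0=0.01, days=60, substeps=100):
    """Generate daily S and I values using small forward Euler steps."""
    dt = 1.0 / substeps
    s, i = s0, i0
    s_daily, i_daily = [s], [i]
    for _ in range(days):
        for _ in range(substeps):
            new_infections = beta * s * i * dt
            s -= new_infections
            i += new_infections - gamma * i * dt
        s_daily.append(s)
        i_daily.append(i)
    return s_daily, i_daily


def pinn_style_loss(s, i, observed_i, beta, gamma, dt=1.0, physics_weight=1.0):
    """Data misfit plus SIR equation residual for a candidate trajectory.

    In a real PINN, s and i would be outputs of a neural network and the
    time derivatives would come from automatic differentiation.
    """
    data_loss = sum((a - b) ** 2 for a, b in zip(i, observed_i)) / len(i)
    residuals = []
    for k in range(1, len(s) - 1):
        ds_dt = (s[k + 1] - s[k - 1]) / (2 * dt)  # central difference
        di_dt = (i[k + 1] - i[k - 1]) / (2 * dt)
        res_s = ds_dt + beta * s[k] * i[k]                 # dS/dt = -beta*S*I
        res_i = di_dt - beta * s[k] * i[k] + gamma * i[k]  # dI/dt = beta*S*I - gamma*I
        residuals.append(res_s ** 2 + res_i ** 2)
    physics_loss = sum(residuals) / len(residuals)
    return data_loss + physics_weight * physics_loss


# Synthetic "observations" produced with illustrative parameter values.
s, i = sir_trajectory(beta=0.3, gamma=0.1)
loss_true = pinn_style_loss(s, i, i, beta=0.3, gamma=0.1)
loss_wrong = pinn_style_loss(s, i, i, beta=0.5, gamma=0.1)
```

Because the loss is lower for the parameter values that generated the data than for the wrong ones, minimising it over both the trajectory and the parameters is one way such a method can infer parameters that are not directly observable.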

Because pandemics evolve over time and data are collected continuously, PINNs can be retrained as new data arrive, updating the models with newly inferred parameters. The computational time needed to retrain PINNs on new data is short compared with the timescale on which a pandemic evolves.

The findings suggest that while no model can accurately capture all the dynamics that play out during an extended pandemic, models with the ability to adjust key parameters on the fly could make for more useful predictions.