Big data is driving fundamental transformation across all industries and sectors, and finance is no exception. With the proliferation of mobile devices and a rapidly increasing share of digital transactions, financial institutions are striving to make sense of the massive volumes of data being generated and captured, so that they can understand their customers, predict their needs and serve them better. Around the globe, they are also under increasing regulatory pressure to improve risk reporting and to control money laundering and fraud.

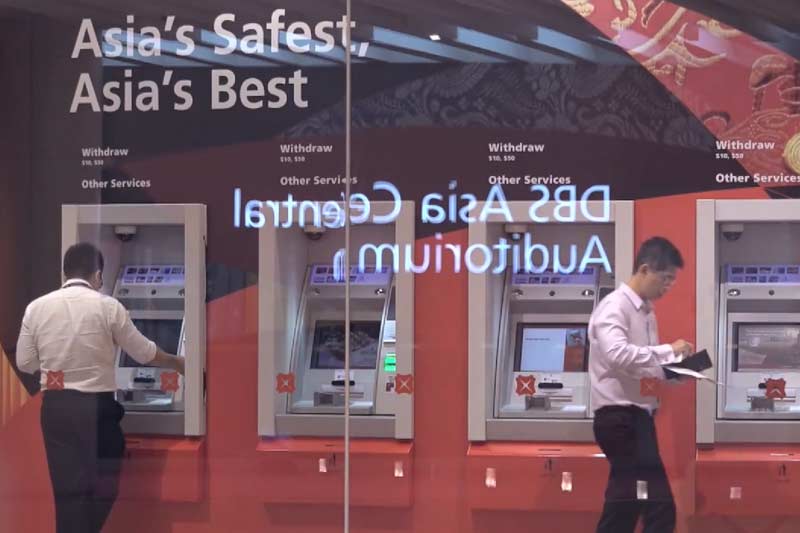

As a leading bank in Asia, DBS faces these issues too. A few years ago it began moving towards becoming a data-driven organisation, making decisions based on data rather than instinct.

However, the company’s traditional technology stack for supporting advanced analytics was expensive to scale and not flexible enough to support this work.

With Cloudera as a partner, DBS built a central data team and an enterprise data hub, enabling the bank to scale out more economically and to experiment more. The agility of the platform allows the bank to explore use cases and iterate easily and quickly, without having to worry about ROI or build an investment case beforehand.

With the ability to more easily store and analyse billions of events in a modern data platform, DBS can answer questions before they’re asked, engage customers more effectively and deliver better service.

This has enabled DBS staff to experiment more and to be at the forefront of innovation when it comes to understanding the customer experience and applying human-centred design to its services.

For instance, machine learning can be used to understand customer sentiment. All calls to the bank’s call centres are recorded. The recordings can be converted to text, and machine learning algorithms can then be run on the analytics platform to gauge sentiment. Problems can be flagged so that the bank can reach out to the affected customers.
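
The flow from transcript to flagged call can be illustrated with a toy sketch. This is not the bank's implementation: a simple keyword count stands in for the trained machine learning model the article refers to, and the term list, the `min_hits` threshold and the call IDs are all hypothetical.

```python
# Hypothetical list of words that suggest an unhappy customer.
NEGATIVE_TERMS = {"angry", "frustrated", "complaint", "unacceptable", "cancel"}

def flag_negative_calls(transcripts, min_hits=2):
    """Return IDs of calls whose transcript contains at least `min_hits`
    distinct negative terms. A keyword count is a crude stand-in for a
    real sentiment model."""
    flagged = []
    for call_id, text in transcripts.items():
        # Normalise: split on whitespace, strip punctuation, lowercase.
        words = {w.strip(".,!?").lower() for w in text.split()}
        hits = len(words & NEGATIVE_TERMS)
        if hits >= min_hits:
            flagged.append(call_id)
    return flagged

# Invented transcripts, as they might look after speech-to-text conversion.
transcripts = {
    "call-001": "Thank you, the transfer went through fine.",
    "call-002": "This is unacceptable. I am frustrated and want to cancel my card!",
}
flagged_calls = flag_negative_calls(transcripts)
```

In practice the keyword step would be replaced by a model trained on labelled transcripts; the surrounding loop (score each call, flag those above a threshold) would stay much the same.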

Ultimately, behavioural information and machine learning, in combination with biometrics, could even enable ‘invisible authentication’, where a customer no longer needs to provide numerous supporting documents, use a physical device for transactions or answer questions like ‘What is your mother’s maiden name?’.

In a video interview with Wee Wu Neo from The Neo Dimension, David Gledhill, Head, Group Technology and Operations, DBS, explained that the use of data goes beyond customers. The transformation to a data-driven organisation has significantly improved operations across the bank.

Data can be used to find out where fraud is happening in the company. To take a specific example, trade financing is highly prone to fraud. To deal with this, DBS started looking at data other than invoices and transactions to predict the possibility of fraud.

“You look at things like ship movements. If you know the typical movement patterns of goods from one port to another, then anomalous goods movement or timing that doesn’t look like typical timing for that type of transaction or a behavioural shift in importers or exporters or in warehousing, signals where potentially fraudulent trade might be going on,” Mr Gledhill said.
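
As a rough illustration of the idea of flagging timing that "doesn’t look like typical timing", the sketch below compares each new shipment's transit time with the history for the same route, using a simple z-score. The article does not say which method DBS uses, so the technique, the threshold of three standard deviations, the route names and the figures are all assumptions made for illustration.

```python
from statistics import mean, stdev

def flag_anomalous_transits(history_days, new_shipments, threshold=3.0):
    """Return shipments whose transit time deviates strongly from route history.

    history_days:  dict mapping route -> list of past transit times in days
    new_shipments: list of (shipment_id, route, transit_days) tuples
    threshold:     z-score magnitude treated as anomalous (assumed value)
    """
    flagged = []
    for shipment_id, route, transit_days in new_shipments:
        past = history_days.get(route, [])
        if len(past) < 2:
            continue  # not enough history to judge this route
        mu, sigma = mean(past), stdev(past)
        if sigma == 0:
            continue  # no variation in history, z-score is undefined
        z = (transit_days - mu) / sigma
        if abs(z) > threshold:
            flagged.append((shipment_id, route, round(z, 2)))
    return flagged

# Invented example data: one route with seven past voyages.
history = {("PORT_A", "PORT_B"): [14, 15, 13, 14, 16, 15, 14]}
shipments = [
    ("S1", ("PORT_A", "PORT_B"), 15),  # close to the usual two weeks
    ("S2", ("PORT_A", "PORT_B"), 31),  # roughly double the usual time
]
flagged = flag_anomalous_transits(history, shipments)
```

Here only shipment `S2` is flagged. A production system would combine many more signals (the behavioural and warehousing shifts mentioned in the quote, for example), but the principle of measuring deviation from a learned baseline is the same.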

Data analytics can predict the likelihood of a relationship manager quitting within the next three months, so that HR staff can intervene early to retain employees. Data can tell the audit department which branch might have issues and should be audited next.

Operational staff can understand and predict customer flows, ATM load, and call centre volumes using data. In fact, one of the first big data projects DBS embarked upon was figuring out the sequence in which ATMs should be filled. The bank went from hundreds of instances of ATMs running out of cash to single-digit numbers.
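
One simple way to think about the refill sequence is to rank machines by how soon each is expected to run dry. The sketch below does this by dividing the current balance by a forecast withdrawal rate. The article does not describe the bank's actual approach, so this heuristic, the field names and the figures are hypothetical.

```python
def refill_order(atms):
    """Order ATMs by estimated hours until cash-out, most urgent first.

    atms: list of dicts with the hypothetical fields
          'id', 'cash_balance' and 'forecast_withdrawals_per_hour'.
    """
    def hours_left(atm):
        rate = atm["forecast_withdrawals_per_hour"]
        if rate <= 0:
            return float("inf")  # no expected demand, so lowest priority
        return atm["cash_balance"] / rate

    return [atm["id"] for atm in sorted(atms, key=hours_left)]

# Invented example data.
atms = [
    {"id": "ATM-1", "cash_balance": 50000, "forecast_withdrawals_per_hour": 1000},
    {"id": "ATM-2", "cash_balance": 20000, "forecast_withdrawals_per_hour": 2000},
    {"id": "ATM-3", "cash_balance": 80000, "forecast_withdrawals_per_hour": 0},
]
order = refill_order(atms)
```

With these figures `ATM-2` (about 10 hours of cash left) comes first, then `ATM-1` (about 50 hours), then `ATM-3`. The hard part in practice is the forecast itself, which is where historical transaction data comes in.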

The bank also moved its financial risk information and the data required for regulatory reporting onto the Cloudera platform to simplify reporting.

Mr Gledhill said, “We’ve applied it to a whole range of different use cases and every single one, we see a massive uplift in terms of the base case that we normally do.”

This has also been aided by the huge, active worldwide community of Hadoop contributors. It includes not just individuals but also tech giants such as Netflix, Amazon and Facebook (the platform itself was inspired by technologies created inside Google). As a result, the platform keeps evolving and improving steadily, and DBS can build on the contributions made by this vibrant community.

DBS wanted to make the data analytics capabilities available to everyone in the bank, rather than confining them to a separate team of data scientists or isolated pockets of analytics.

However, the oft-repeated cliché that technology is easy and the ‘people’ aspect is hard proved true.

The more difficult part was opening up people’s minds to the possibilities. The first few use cases played a key role in overcoming scepticism. They generated a high level of interest and enthusiasm among different teams within the bank, who began to explore how they could leverage analytics in their own areas.

All this improvement in services and operational efficiency has been achieved while reducing costs.

Mr Gledhill said, “We’ve seen anything in the region of 80% reduction in operating cost in a much shorter build time. The real big benefit lift though is the benefit it provides to the business. If you look at our digitally engaged customers, we see material lift in how much revenue a digital customer brings to the bank.”

This is an ongoing journey and DBS expects Cloudera to help it continue along the path towards deeper, better insights.

Content from Cloudera customer success story on www.cloudera.com and video interview at The Neo Dimension.