Given technological advancements and the rapid proliferation of the Internet of Things (IoT), our world is increasingly interconnected. Governments and businesses across the globe also seek to leverage technology to improve their products and services for citizens and customers. While digital technologies present new opportunities and transform the way we live and work, digital disruption also brings new challenges, particularly in cybersecurity.

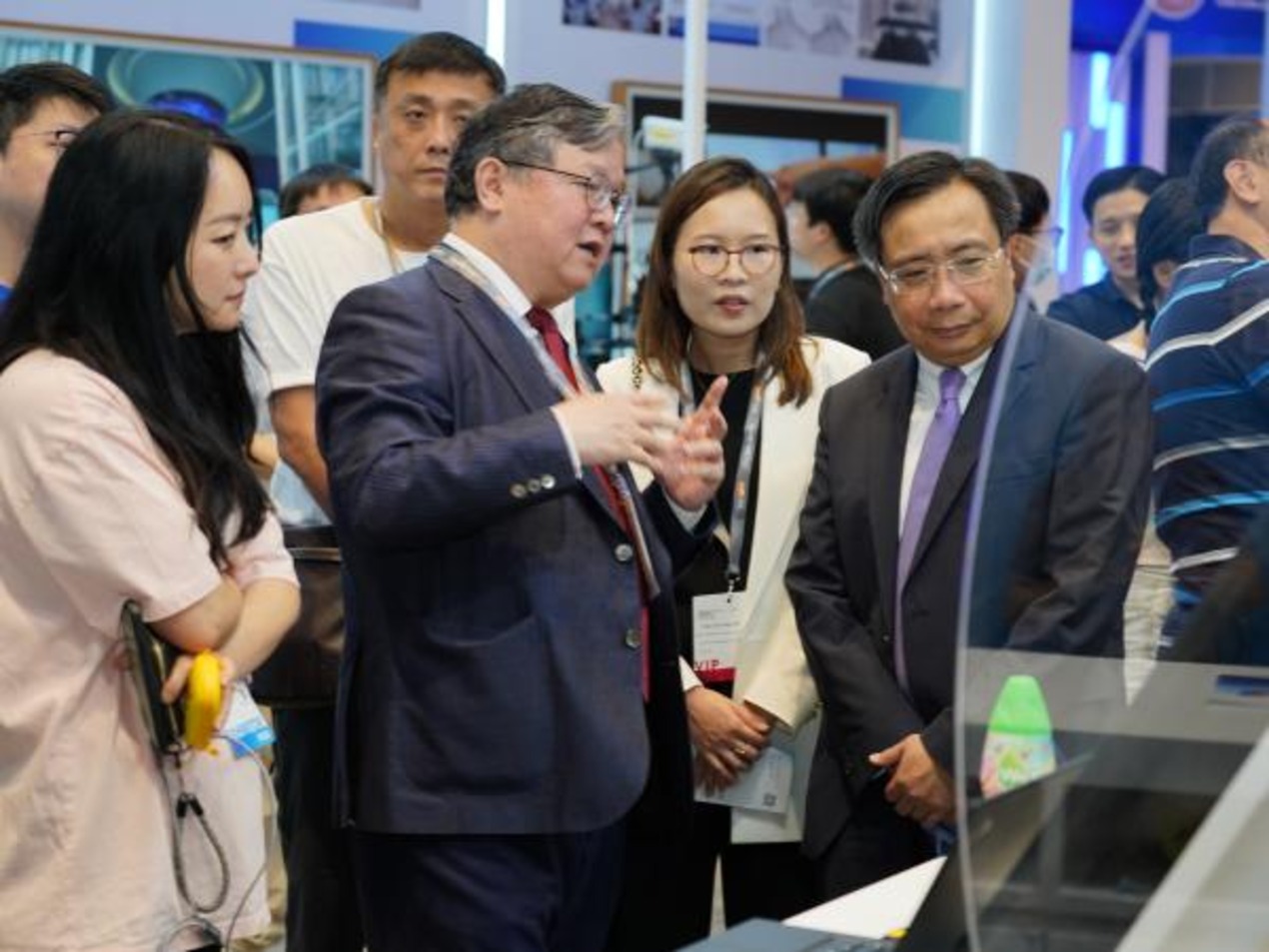

Recently, OpenGov had the privilege to speak to Mr Stephan Neumeier, Managing Director of Kaspersky Lab Asia Pacific, on the fast-changing cybersecurity landscape in the Asia Pacific region and how organisations can better prepare themselves to deal with cybersecurity threats.

When asked to comment on how the cybersecurity landscape has evolved and on some of the emerging trends, Mr Neumeier observed that 2017 had seen “the most intensive of cybersecurity incidents”.

“Unfortunately, most of what our researchers at Kaspersky Lab have projected to happen was brought to fruition: espionage has gone mobile, APTs attacked enterprise networks, financial attacks continued, a new wave of ransomware attacks came about, critical ICS processes were disrupted, poorly secured IoT devices were targeted, and even information warfare figured last year,” he said.

This year, he saw a continuation of these attacks and much more as the themes and trends build on each other, year after year, further expanding the threat landscape in which individuals, businesses and governments are relentlessly pursued and attacked.

Cybersecurity challenges in Asia Pacific

Different markets may face different challenges, depending on each region’s capabilities to tackle and mitigate cybersecurity threats. OpenGov asked Mr Neumeier whether the Asia Pacific region faces similar or unique challenges compared to the rest of the world.

According to him, the Asia Pacific market is very different from other regions globally, especially from a cultural perspective.

“It is a very young region with a very significant number of millennials growing up. Not to mention that it includes the two most populated countries in the world, China and India. As the internet is becoming a major part of our lives, these young generations require access to fast internet. To cater to this need, massive investments in infrastructure are being made by the respective countries in APAC to improve internet speed, making sure that their countries are not left behind and can keep up with the growth of the technology space,” he said.

“With that, in the last few years, infrastructure within the APAC countries has begun to reach almost the same quality as in European countries such as Switzerland and Germany. However, from a cybersecurity perspective, the awareness and understanding are not at the same level as in these mature countries, and this is a huge challenge for the APAC region. This is why, in this market, we should focus more on education and awareness of cybersecurity.”

On the level of cybersecurity awareness in the region, Mr Neumeier pointed out that although the region has a large number of active Internet users, awareness of cybersecurity among them still appears to be low.

Unfortunately, this low level of cybersecurity awareness combined with high Internet usage means that Internet users in the region have been prime targets of cyberattacks, such as the Naikon APT campaign against top-level government agencies and civil and military organisations, the WannaCry and Petya ransomware outbreaks, and the DDoS attacks unleashed by the Mirai malware.

“Additionally, bring your own device (BYOD) is the big trend affecting how businesses operate online, with 72% of companies expecting to use the concept extensively in the near future, according to a survey by B2B International on behalf of Kaspersky Lab. It’s inevitable that in any company, small or large, many employees will use personal devices to connect to the corporate network and access confidential data. That’s why companies need to implement policies that safeguard both corporate and personal mobile devices,” he said.

“As a society, we need to find ways to raise awareness of the risks associated with online activity and develop effective methods to minimize these risks. There’s technology at the core of any solution to tackle cybersecurity. But it is most important to incorporate the human dimension of security, so we can effectively mitigate the risk,” he added.

“All it takes is a single person to bring it all down”

On the biggest cybersecurity threats organisations face today, Mr Neumeier highlighted the human factor in IT security, naming it the “most common security vulnerability”.

He cited a recent global study conducted by Kaspersky Lab on cybersecurity awareness involving about 5,000 businesses, which showed that organisations face a very real threat from within. According to this study, careless or uninformed employees are the top cause of data leakage in organisations worldwide, at about 52%.

“A closer look at this study reveals that despite the rapid proliferation of destructive and more complex malware or Trojans, organisations should be more concerned about their most important asset – their people,” he said.

“You can have the best technical means and the most thought-out security policy but it is never enough to protect your organisation from cyberthreats. All it takes is a single person to bring it all down,” he added.

However, he also pointed out that in most cases this is unintentional, because that one employee is unaware of threats and doesn’t have basic cybersecurity knowledge. According to the cited study, approximately 65% of organisations already invest in employee cybersecurity training to close this loophole.

Data breaches affect both large and small organisations, with average losses from data breaches currently passing the $1 million mark, a significant jump over the past two years.

“For enterprises, the average cost of one incident from March 2017 to February 2018 has reached $1.23 million, which is 24% higher than in 2016-2017. For SMBs, it’s an average of $120,000 per cyber incident, which is $32,000 more than a year ago,” he shared.
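To put the two quoted figures on the same footing, the short sketch below backs out the previous-year averages they imply. The derived values are simple arithmetic on the numbers in the quote, not figures reported by the study, and the function and constant names are illustrative only.

```python
# Figures quoted in the interview (USD).
ENTERPRISE_COST_2017_18 = 1_230_000  # average cost per incident, Mar 2017 to Feb 2018
ENTERPRISE_GROWTH = 0.24             # "24% higher" than 2016-2017
SMB_COST_2017_18 = 120_000           # average cost per incident for SMBs
SMB_INCREASE = 32_000                # "$32,000 more than a year ago"


def implied_previous_cost(current: float, growth: float) -> float:
    """Back out last year's figure from this year's figure and its growth rate."""
    return current / (1 + growth)


def relative_increase(current: float, absolute_increase: float) -> float:
    """Express an absolute increase as a fraction of the previous year's figure."""
    previous = current - absolute_increase
    return absolute_increase / previous


# Derived values (assumptions: the quoted averages are directly comparable year on year).
enterprise_prev = implied_previous_cost(ENTERPRISE_COST_2017_18, ENTERPRISE_GROWTH)
smb_prev = SMB_COST_2017_18 - SMB_INCREASE
smb_growth = relative_increase(SMB_COST_2017_18, SMB_INCREASE)

print(f"Implied enterprise cost, 2016-2017: ${enterprise_prev:,.0f}")
print(f"Implied SMB cost a year earlier:    ${smb_prev:,.0f}")
print(f"Implied SMB year-on-year growth:    {smb_growth:.1%}")
```

On these numbers, the implied enterprise average for 2016-2017 is roughly $992,000, and the implied SMB average a year earlier is $88,000. That makes the SMB rise about 36% in relative terms, which is steeper than the 24% quoted for enterprises even though the absolute amount is smaller.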

Mr Neumeier reiterated that, whether massive or small-scale, about 80% of cybersecurity incidents can be traced to human error.

“More than ever, cybersecurity awareness and education are now critical requirements for organisations of any size that are faced with the prospect of falling prey to cybercriminals. At this point, there is a definite need for organisations, regardless of size, for solutions that provide centralized security management of networks combined with training that zeroes in on the ‘how’ part of the equation.”

Importance of an effective cybersecurity strategy

Organisations need to develop an effective and all-round cybersecurity strategy to protect their assets and interests. Mr Neumeier recommended a cyclical approach of continuous monitoring and analytics in building an effective cybersecurity strategy.

“Twenty years in the industry has taught us that what makes the most sense for enterprise IT infrastructure to have true cybersecurity is to put in place a cyclical adaptive security framework. This would have to be a flexible, proactive multi-layered protection infrastructure which dynamically adapts and responds to the ever-changing threat landscape,” he said.

According to him, Kaspersky Lab’s security architecture is based on a cycle of activities comprising four key segments, namely Prevent, Detect, Respond, and Predict.

He went on to explain, “At the core of Kaspersky Lab’s True Cybersecurity is HuMachine Intelligence, a seamless fusion of Big Data-based Threat Intelligence, Machine Learning and Human Expertise. We have designed it so because we believe we’re in a never-ending arms race: IT threats are dramatically evolving day in and day out and here we are totally focused on following the trail of hackers and further refining our solutions so we stay ahead of them. It’s a continuous process.”

Key components of cybersecurity resilience

On how threat intelligence and endpoint detection can protect organisations and boost their ability to respond to threats, Mr Neumeier stated that targeted attacks became one of the fastest-growing threats in 2017.

“It used to be that organisations employed endpoint protection platforms (EPP) to control known threats such as traditional malware, or unknown viruses which might use a new form of malware directed at endpoints. However, cybercrime techniques have evolved significantly, such that attack processes have become aggressive and expansive in recent years,” said Mr Neumeier.

It is alarming that the specifics of the targeted attacks cybercriminals use, together with the technological limitations of traditional endpoint protection products, mean that a conventional cybersecurity approach is no longer sufficient.

The cost of incidents associated with simple threats is negligible at US$10,000 compared with an advanced persistent threat (APT) attack, which would set an organisation back about US$926,000.

“To withstand targeted attacks and APT-level threats on endpoints, organisations need to consider EPP with endpoint detection and response (EDR) functionalities,” the expert said.

“EDR is a cybersecurity technology that addresses the need for real-time monitoring, focusing heavily on security analytics and incident response on corporate endpoints. It delivers true end-to-end visibility into the activity of every endpoint in the corporate infrastructure, managed from a single console, together with valuable security intelligence for use by an IT security expert in further investigation and response,” he explained.

According to Mr Neumeier, an organisation needs EDR if it is looking for proactive detection of new or unknown threats and previously unidentified infections penetrating it directly through endpoints and servers. This is achieved by analysing events in the grey zone, home to those objects or processes that fall in neither the “trusted” nor the “definitely malicious” zone.
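The grey-zone idea can be illustrated with a toy triage routine. This is only a sketch of the three-way split described above, assuming simple allow and deny sets of object identifiers; it is not how Kaspersky's EDR product works, and `Zone`, `classify`, `grey_zone_events` and the sample identifiers are all hypothetical.

```python
from enum import Enum


class Zone(Enum):
    TRUSTED = "trusted"
    MALICIOUS = "definitely malicious"
    GREY = "grey zone"


def classify(object_id: str, trusted: set[str], malicious: set[str]) -> Zone:
    """Place an object in one of three zones; anything unknown lands in the grey zone."""
    if object_id in malicious:
        return Zone.MALICIOUS
    if object_id in trusted:
        return Zone.TRUSTED
    return Zone.GREY


def grey_zone_events(events: list[str], trusted: set[str], malicious: set[str]) -> list[str]:
    """Return the events that need further analysis because no verdict exists yet."""
    return [e for e in events if classify(e, trusted, malicious) is Zone.GREY]


# Hypothetical sample data, for illustration only.
trusted_ids = {"signed-updater.exe", "office-suite.exe"}
malicious_ids = {"known-ransomware.bin"}
observed = ["office-suite.exe", "known-ransomware.bin", "unknown-script.ps1"]

to_investigate = grey_zone_events(observed, trusted_ids, malicious_ids)
print(to_investigate)
```

Here only `unknown-script.ps1` is flagged, since it appears in neither set. In practice the interesting work is what happens next to grey-zone items (behavioural analysis, analyst review), which this sketch deliberately leaves out.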

Depending on each organisation’s maturity and experience in the field of security, and the availability of necessary resources, some businesses will find it most effective to use their own expertise for endpoint security but will take advantage of outsourced resources for more complex aspects.

Meanwhile, they can build up in-house expertise with skills training, through access to a threat intelligence portal and APT intelligence reporting, and by using threat data feeds. Alternatively, and particularly attractive for overwhelmed or understaffed security departments, they can adopt third-party professional services from the outset.

Kaspersky Lab’s approach to endpoint protection includes the following components: Kaspersky Endpoint Security, Kaspersky Endpoint Detection and Response, and Kaspersky Cybersecurity Services.

For organisations unable, for reasons of regulatory compliance, to release or transfer any corporate data outside their environment, or that require complete infrastructure isolation, Kaspersky Private Security Network provides most of the benefits of global cloud-based threat intelligence as provided by Kaspersky Security Network (KSN), without any data ever leaving the controlled perimeter.

To counteract advanced threats and targeted attacks, businesses need automated tools and services designed to complement each other and help security teams prevent most attacks, detect unique new threats rapidly, handle live attacks, respond to attacks in a timely manner, and predict future threats.

On prevention as a key line of defence, Mr Neumeier gave the following suggestions on measures that organisations can take to prevent cybersecurity incidents:

“We cannot emphasise enough that preventing cybersecurity incidents from happening, or from damaging our organisation’s finances or reputation, starts with raising awareness and education.”

In this, he urged organisations to strengthen the weakest links, toughen the target systems and assets, and improve the effectiveness of current solutions to keep up with the modern threats.

At the same time, Mr Neumeier emphasised how important it is for organisations to be well equipped with threat intelligence.

“This is moving from a reactive security model to a proactive security model based on risk management, continuous monitoring, more informed incident response and threat hunting capabilities,” he said.

“As we say at Kaspersky Lab, prediction is doing more to guard against future threats. Having access to cybersecurity experts that will keep organisations updated on the constantly changing global threat landscape and will help them test their systems and existing defenses is a vital element to help them adapt and keep pace with emerging security challenges.”

Tips on how to keep up with the fast-changing cybersecurity landscape

As we face increasing cybersecurity challenges, what can organisations and individuals do to protect themselves?

For organisations, Mr Neumeier spoke on the importance of having cybersecurity training and adopting a cyclical approach to cybersecurity strategy.

“Based on how we conduct our cybersecurity training, here are two quick tips: First, avoid abstract information and focus on certain practical skills. Second, instruct different groups of employees differently,” he shared.

“Educating the staff on the motivations of security policies, the importance of working safely and how to contribute to the security of their organisations can help mitigate the risk of security incidents and safeguard what is truly important – their data.”

He also underscored the importance of having a new mindset in the face of new threats. Here are some of the best practices he shared on how individuals can be risk-ready in the world of advanced attacks and epidemic outbreaks:

1. Remember the weakest link. Be aware and knowledgeable about cybersecurity.
2. Invest in technology. Shift your focus towards a proactive protection approach that goes beyond prevention; it should be adaptive, advanced and predictive, and involve human expertise.
3. Back up.
4. Encrypt.
5. Secure your network with a strong password.

“There exist today a great many highly motivated cybercriminals who will try to find all points of vulnerability in an Internet user or within an organisation just to get what they want. Most of the time, the road to remediation and recovery is complicated and expensive, whether the victim is an individual or an institution,” he concluded.